The Crash…

Recently, a Windows service hosting a WCF endpoint has been crashing with out-of-memory errors increasingly often. Although usage of the service had grown, it seemed unlikely that legitimate operation would consume the service's entire address space, so I naturally suspected a memory leak.

First, I went through all static collections looking for possible leaks. Inspection turned up only one small leak, which I strongly doubted was the real cause. A colleague suggested that SQL Server might be consuming all available memory and that we should set an upper limit on it. We did set the limit, but neither change resolved the issue. Another coworker suggested investigating the crash memory dump with WinDbg.

Using WinDbg

I hadn't used WinDbg before, and getting started was, frankly, daunting. After several hours, however, I settled into an effective workflow. My first mistake was loading WinDbg with the wrong bitness. The application in question is 32-bit (because it depends on several legacy DLLs), but I was trying to use the 64-bit WinDbg. No dice (just some strange and confusing errors that slowed me down). Once I had the right WinDbg and the right memory dump, I was ready to go.

After opening the dump, the first step is to load the reference symbols for Microsoft DLLs:

```
.sympath srv*http://msdl.microsoft.com/download/symbols
.reload
```

Next, to debug the .NET parts of the dump, a .NET debugging extension, psscor, needs to be loaded. Since this is a .NET 4 app, version 4 of psscor (psscor4) is needed.

I leaned heavily on the article Intro to WinDbg for .NET Developers. In the examples that follow, “k:\windbg” is the working directory where I collected the dump and helper files.

```
.load k:\windbg\psscor4.dll
.symfix c:\mylocalsymcache
.cordll -ve -u -l
```

These commands are enough for basic .NET debugging. But as I thrashed around exploring the dump for several hours, I found another extension, SOSEX, that adds capabilities on top of psscor. In particular, SOSEX includes reference tracking, which I found very helpful.

```
.load k:\windbg\sosex.dll
!bhi
```

A helpful cheat sheet clearly describes all available commands at this point.

Another short article on debugging memory leaks got me on the right track. I also referenced a source describing how to access object values.

An overall view of memory usage, sorted from least total usage to most, is the first point of analysis:

```
!dumpheap -stat
Statistics:
      MT    Count  TotalSize Class Name
...[many small entries omitted]
71148b08    10520    1346560 System.Data.DataColumn
735ceb9c    17895    1431600 System.Runtime.Remoting.ServerIdentity
735f0764    13218    1506528 System.Int32[]
735f2388    10785    4252704 System.Collections.Hashtable+bucket[]
735d6a0c     3313    7084876 System.Decimal[]
711493a0       52   17129584 System.Data.DataRow[]
735ef46c    32056   21923408 System.String[]
735eed0c    12115   36322560 System.Object[]
735f35fc    59441   54614724 System.Byte[]
735ee918  3079311   64983264 System.String
71149898      932   90479536 System.Data.RBTree`1+Node[[System.Data.DataRow, System.Data]][]
735eba00  5610857  134660568 System.Guid
71148a30  2821656  191872608 System.Data.DataRow
```

I immediately noticed that the largest entry was DataRow, and several of the other large entries seemed related (the RBTree nodes of DataRow, DataRow[], and DataColumn). Guid is also related, since all rows in the database (and thus all DataRows in memory) are keyed on Guids. The application does use DataTable heavily to bridge SQL and WCF, but in no case should it be holding millions of rows in active memory. According to this dump, about 2.8 million DataRows have accumulated in process memory.
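As a quick sanity check, dividing each TotalSize by its Count gives the per-instance size, and summing the DataRow-related entries shows how much of the heap they account for (all figures taken from the listing above):

$$
\frac{191{,}872{,}608\ \text{B}}{2{,}821{,}656} = 68\ \text{B per DataRow},
\qquad
\frac{134{,}660{,}568\ \text{B}}{5{,}610{,}857} = 24\ \text{B per Guid}
$$

$$
191{,}872{,}608 + 90{,}479{,}536 + 134{,}660{,}568 + 17{,}129{,}584 = 434{,}142{,}296\ \text{B} \approx 434\ \text{MB}
$$

The 68 bytes matches the Size column of the per-object DataRow listing. Guid is a value type, so Guids that appear as separate heap objects are boxed: 16 bytes of data plus the 8-byte object header of a 32-bit process. The sum assumes, as above, that the Guids belong to the DataRows. Even so, roughly 434 MB in these four entries is a large share of a 32-bit process's address space.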

I suspected a leak that was retaining DataRows. However, the code never stores DataTable, DataColumn, or DataRow instances in any obvious, explicit cache or static table.

The next step is to look at the details. Since DataRow is the largest, I picked it to investigate further. The “71148a30” is its MT (method table) from the previous output.

```
!dumpheap -mt 71148a30
```

This command outputs a huge number of entries, but as they scroll by you can see that they are “clustered”, suggesting a relatively small number of collections with very many rows each. The 52 instances of DataRow[] in the first listing support this impression.

```
 Address       MT     Size
015540a8 71148a30       68
0155412c 71148a30       68
015541b0 71148a30       68
01554234 71148a30       68
015542b8 71148a30       68
...[thousands of rows omitted]
3ad0c110 71148a30       68
3ad0c17c 71148a30       68
3ad0fd78 71148a30       68
```

So it looks like we have a number of DataTables (probably 52, one per DataRow[]) in memory, each with a great many rows. That would be unusual, since few places in the application expect large tables: most DataTables have 1 to 100 rows, not thousands or more.

Instead, it might be more useful to follow the chain from the 52 DataRow[] entries. Their MT, from the first listing, is 711493a0:

```
!dumpheap -mt 711493a0
 Address       MT     Size
015ee00c 711493a0       12
015f16e4 711493a0      524
01a6506c 711493a0       12
01c60bb4 711493a0      524
...[couple dozen rows omitted, all size = 524]
2c276c28 711493a0      524
2c6a5278 711493a0      524
3996c578 711493a0    65548
40a98ed8 711493a0   262156
53071010 711493a0 16777228
```

Whoa, what's this? A single DataRow[] (that is, a single table) is taking a HUGE amount of memory. Naturally that's a target of interest. However, the many smaller entries could be interesting as well, so we'll investigate both.
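The sizes themselves reveal how many slots each array has. In a 32-bit process, an array of references carries 12 bytes of overhead (sync block index, method table pointer, and length, 4 bytes each) plus 4 bytes per element, so the element count is (Size − 12) / 4:

$$
\begin{aligned}
\tfrac{12 - 12}{4} &= 0 \\
\tfrac{524 - 12}{4} &= 128 \\
\tfrac{65{,}548 - 12}{4} &= 16{,}384 \\
\tfrac{262{,}156 - 12}{4} &= 65{,}536 \\
\tfrac{16{,}777{,}228 - 12}{4} &= 4{,}194{,}304
\end{aligned}
$$

These are capacities, not row counts: the 12-byte arrays are empty, and every non-empty capacity is a power of two, which suggests the row arrays grow by doubling. The `!do` output for the two largest arrays agrees (65,536 and 4,194,304 elements).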

The first leak – many smaller objects

First, we'll investigate the many smaller instances.

The gcroot command finds the path (or paths) from a GC root, a live reference that keeps objects alive, down to the object in question:

```
!gcroot 2c276c28
HandleTable:
    00c613ec (pinned handle)
    -> 024c34c8 System.Object[]
    -> 01bff620 System.Collections.Generic.Dictionary`2[[System.Object, mscorlib],[System.Collections.Generic.List`1[[Microsoft.Win32.SystemEvents+SystemEventInvokeInfo, System]], mscorlib]]
    -> 01bff968 System.Collections.Generic.Dictionary`2+Entry[[System.Object, mscorlib],[System.Collections.Generic.List`1[[Microsoft.Win32.SystemEvents+SystemEventInvokeInfo, System]], mscorlib]][]
    -> 01c0d9f0 System.Collections.Generic.List`1[[Microsoft.Win32.SystemEvents+SystemEventInvokeInfo, System]]
    -> 0dee8adc Microsoft.Win32.SystemEvents+SystemEventInvokeInfo[]
    -> 2c2214ac Microsoft.Win32.SystemEvents+SystemEventInvokeInfo
    -> 2c22148c Microsoft.Win32.UserPreferenceChangedEventHandler
    -> 2c27b3ac System.Windows.Forms.ContextMenuStrip
    -> 2c27b650 System.ComponentModel.EventHandlerList
    -> 2c27c650 System.ComponentModel.EventHandlerList+ListEntry
    -> 2c27c388 System.ComponentModel.EventHandlerList+ListEntry
    -> 2c27c354 System.ComponentModel.EventHandlerList+ListEntry
    -> 2c27c320 System.ComponentModel.EventHandlerList+ListEntry
    -> 2c27c300 System.EventHandler
    -> 2c27b178 Microsoft.Reporting.WinForms.ReportPanel
    -> 2c27acc8 Microsoft.Reporting.WinForms.WinRSviewer
    -> 2c279e64 System.Windows.Forms.Panel
    -> 2c279af0 System.Windows.Forms.TableLayoutPanel
    -> 2c2798a0 Microsoft.Reporting.WinForms.RVSplitContainer
    -> 2c277a3c System.Windows.Forms.Panel
    -> 2c2776d4 System.Windows.Forms.TableLayoutPanel
    -> 2c277484 Microsoft.Reporting.WinForms.RVSplitContainer
    -> 2c276e84 Microsoft.Reporting.WinForms.ReportViewer
    -> 2c277114 Microsoft.Reporting.WinForms.ReportHierarchy
    -> 2c277124 System.Collections.Generic.Stack`1[[Microsoft.Reporting.WinForms.ReportInfo, Microsoft.ReportViewer.WinForms]]
    -> 2c21f0ac Microsoft.Reporting.WinForms.ReportInfo[]
    -> 2c21efec Microsoft.Reporting.WinForms.ReportInfo
    -> 2c220fc4 Microsoft.Reporting.WinForms.LocalReport
    -> 2c28de40 Microsoft.Reporting.WinForms.SubreportProcessingEventHandler
    -> 2c218b40 <error>
    -> 2c22ce00 System.Data.DataSet
    -> 2c22ce6c System.Data.DataTableCollection
    -> 2c22ce90 System.Collections.ArrayList
    -> 2c2398f8 System.Object[]
    -> 2c22f884 System.Data.DataTable
    -> 2c22fa0c System.Data.RecordManager
    -> 2c276c28 System.Data.DataRow[]
```

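That's a lot to take in. Read it from the bottom (the DataRow[] in question) up to the top (the root). Along the way, it tells us this table belongs to a DataSet that is reachable from the SubreportProcessing handler of a LocalReport inside a WinForms SSRS ReportViewer. The whole ReportViewer is in turn kept alive by an event handler: a ContextMenuStrip, whose own event handlers point back into the ReportViewer, is subscribed to the static Microsoft.Win32.SystemEvents.UserPreferenceChanged event.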

You might recall this application is not a desktop app; it is a headless Windows service. Nevertheless, we embed the WinForms SSRS component so we can convert RDL into PDF as part of an automated process. This happens occasionally, and once the PDF is done, nothing more is done with the data. There is absolutely NO REASON for live references to this component to remain. It's a leak!

But why?

Looking into the code exposes the reason:

```csharp
var sia = new WsbisReports.BridgeInventory.SI.SIA();
sia.PrepareReport(result, p);
// ... find destFilePath
sia.CreatePDF(destFilePath);
```

Here SIA inherits from a class that encapsulates the WinForms LocalReport. What's missing? Dispose. LocalReport is a disposable class, but the code that uses it never calls Dispose. The corrective action: make SIA (more precisely, the application's base RDLReport class) implement IDisposable and dispose the LocalReport, and wrap every instance in a using statement to guarantee disposal.

A discussion online indicates that, in addition to disposal, several other actions might help:

- report.ReleaseSandboxAppDomain();

- Unsubscribe from any SubreportProcessing events

- Clear the data sources

- Dispose the report

The first three actions can be completed in the new Dispose method once the encapsulating class implements IDisposable.
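A minimal sketch of such a Dispose method follows, assuming a reference to the ReportViewer WinForms assembly (2010 or later, where LocalReport implements IDisposable). `RdlReportBase` and `SetSubreportHandler` are hypothetical stand-ins for the application's RDLReport class, not its actual code. Keeping a reference to the subreport handler is what makes unsubscribing possible, and the gcroot chain above shows why that matters: the SubreportProcessing handler was the link to the DataSet.

```csharp
using System;
using Microsoft.Reporting.WinForms; // assumption: ReportViewer WinForms 2010+ is referenced

// Hypothetical stand-in for the application's RDLReport base class.
public class RdlReportBase : IDisposable
{
    private SubreportProcessingEventHandler _subreportHandler;
    private bool _disposed;

    protected LocalReport Report { get; } = new LocalReport();

    // Derived reports attach their subreport handler here so Dispose can detach it later.
    protected void SetSubreportHandler(SubreportProcessingEventHandler handler)
    {
        if (_subreportHandler != null)
            Report.SubreportProcessing -= _subreportHandler;
        _subreportHandler = handler;
        if (handler != null)
            Report.SubreportProcessing += handler;
    }

    public void Dispose()
    {
        Dispose(true);
        GC.SuppressFinalize(this);
    }

    protected virtual void Dispose(bool disposing)
    {
        if (_disposed) return; // safe to call Dispose more than once
        if (disposing)
        {
            Report.ReleaseSandboxAppDomain(); // release the sandbox AppDomain immediately
            SetSubreportHandler(null);        // unsubscribe so the handler's target can be collected
            Report.DataSources.Clear();       // drop references to the report's DataTables
            Report.Dispose();                 // finally dispose the LocalReport itself
        }
        _disposed = true;
    }
}
```

Callers then wrap each report in a using block, for example `using (var sia = new WsbisReports.BridgeInventory.SI.SIA()) { sia.PrepareReport(result, p); sia.CreatePDF(destFilePath); }`, so the cleanup runs even if PDF generation throws.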

A few large objects

There are three larger DataRow[] arrays (and therefore three larger DataTables) in this dump. We'll look at each one, starting with the smallest of the three.

```
!gcroot 3996c578
Thread 9e78:
    0b97ef3c 7072cf80 System.ServiceModel.Channels.ServerReliableChannelBinder`1+DuplexServerReliableChannelBinder`1[[System.__Canon, mscorlib],[System.__Canon, mscorlib]].OnSend(System.__Canon, System.ServiceModel.Channels.Message, System.TimeSpan)
        ebp-10: 0b97ef4c
            -> 39c11654 System.ServiceModel.Security.SecurityAppliedMessage
            -> 39c11158 System.ServiceModel.Dispatcher.OperationFormatter+OperationFormatterMessage
            -> 39c11178 System.ServiceModel.Dispatcher.OperationFormatter+OperationFormatterMessage+OperationFormatterBodyWriter
            -> 390f6220 System.Data.DataSet
            -> 390f628c System.Data.DataTableCollection
            -> 390f62b0 System.Collections.ArrayList
            -> 3922fa2c System.Object[]
            -> 3922e600 System.Data.DataTable
            -> 3922e788 System.Data.RecordManager
            -> 3996c578 System.Data.DataRow[]
```

Conclusion: this is an “in-flight” object that WCF is in the middle of sending (note the OnSend frame on the thread's stack). Not a leak.

How about the medium-sized object?

```
!do 40a98ed8
Name:        System.Data.DataRow[]
MethodTable: 711493a0
EEClass:     7322f4e4
Size:        262156(0x4000c) bytes
Array:       Rank 1, Number of elements 65536, Type CLASS
```

At first glance, the table has allocated an array for up to 65,536 rows. That's a lot of rows compared to the in-memory tables the service normally processes. We can trace the object's life through the garbage collector:

```
!gcroot 40a98ed8
Thread 2668:
    1531daf0 07887608 WsbisWcfService.dll!Unknown
        ebp+e8: 1531dbdc
            -> 3a09b9d0 System.Data.DataTable
            -> 3a09bb58 System.Data.RecordManager
            -> 40a98ed8 System.Data.DataRow[]
```

This object is alive, with a reference being held by our service.

We can first use the owning DataTable to find out the actual number of rows (65,536 is just the allocated array size).

```
!mdt 3a09b9d0
3a09b9d0 (System.Data.DataTable)
    ...[omitted rows]
    nextRowID:0xad77 (System.Int64)
    ...[omitted rows]
```

0xad77 = 44,407 in decimal. So the table has about 44 thousand rows. Still a lot of rows for what the app normally does, but not inconceivably huge.

Where is the reference being held? Since I'm new to WinDbg, I couldn't figure out how to get the method name from the address, but I was able to disassemble the method:

```
!u 07887608
Normal JIT generated code
WsbisWcfService.dll!Unknown
Begin 07886ef8, size 9bf
07886ef8 55              push    ebp
07886ef9 8bec            mov     ebp,esp
...[omitted rows]
07886f95 ff35b4dd6002    push    dword ptr ds:[260DDB4h] (" request received from ")
...[omitted rows]
07887603 e8f89149f9      call    00d20800 (WsbisClient.dll!Unknown, mdToken: 0600004d)
>>> 07887608 e8704ec86c  call    clr!IL_Rethrow (7450c47d)
0788760d c745e400000000  mov     dword ptr [ebp-1Ch],0
...[omitted rows]
0788769d 8b15dcdd6002    mov     edx,dword ptr ds:[260DDDCh] ("SYS_CHANGE_OPERATION")
...[omitted rows]
```

The key things I picked up from the giant disassembly were the string constants, which let me identify the method in question. Further, the marked instruction (where execution resumes in this frame) is a “rethrow”, right after a call into WsbisClient.dll, so I tracked down the try-catch with a rethrow between the two string constants in the method. This let me identify precisely where in the method execution was when the dump was created.

The method is called “GetChangeTable”; the client uses it to request a table of rows that have changed, for local database synchronization. However, 44,000 rows in a single request is unusually high for synchronization, which runs almost continuously. Even when the client has been disconnected for several days, a single batch of 44,000 rows is more than expected.

Let’s find out more before moving on.

Finding the data in a DataTable

In normal .NET code, we can access the data in a DataTable through the Rows indexer, e.g. t.Rows[0]["column"].

However, when looking at a DataTable in a memory dump, the actual data is harder to find. I ended up consulting the .NET reference source for DataRow to find out where the data is stored:

```csharp
/// <devdoc>
/// <para>Gets
/// or sets all of the values for this row through an array.</para>
/// </devdoc>
public object[] ItemArray {
    get {
        int record = GetDefaultRecord();
        _table.recordManager.VerifyRecord(record, this);
        // A brand-new array is allocated on every call...
        object[] values = new object[_columns.Count];
        for (int i = 0; i < values.Length; i++) {
            DataColumn column = _columns[i];
            VerifyValueFromStorage(column, DataRowVersion.Default, column[record]);
            // ...and filled from each column's storage, indexed by record number.
            values[i] = column[record];
        }
        return values;
    }
    // ... setter omitted
}
```

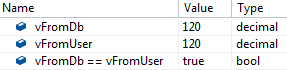

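So the row's item array isn't real at all! The getter builds a new object[] on every call by reading column[record] for each column: the data is actually stored in the columns, indexed by record number.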
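The same behavior is visible from ordinary code. This small sketch (the `ItemArrayDemo` class and the `RowGuid` column are hypothetical; only `SYS_CHANGE_OPERATION` comes from the dump) reads ItemArray twice and edits one of the copies, showing that each read is a fresh array and that the row's stored value is untouched.

```csharp
using System;
using System.Data;

public static class ItemArrayDemo
{
    // Builds a one-row table loosely shaped like the change table in the dump.
    public static DataTable BuildSample()
    {
        var table = new DataTable("Changes");
        table.Columns.Add("SYS_CHANGE_OPERATION", typeof(string));
        table.Columns.Add("RowGuid", typeof(Guid)); // hypothetical key column
        table.Rows.Add("R", Guid.NewGuid());
        return table;
    }

    // True if two reads of ItemArray yield different arrays and editing one of them
    // leaves the value stored in the column unchanged.
    public static bool ItemArrayIsACopy(DataRow row)
    {
        object[] first = row.ItemArray;  // built on demand from the column storage
        object[] second = row.ItemArray; // built again: a different array instance
        object original = row[0];
        first[0] = "U";                  // mutate only the local copy
        return !ReferenceEquals(first, second) && Equals(row[0], original);
    }
}
```

Reading values through `row["SYS_CHANGE_OPERATION"]` goes to the column storage directly, without allocating an array.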

```
!mdt 3a09b9d0
3a09b9d0 (System.Data.DataTable)
    ...[omitted rows]
    columnCollection:3a09bb90 (System.Data.DataColumnCollection)
    ...[omitted rows]

!mdt 3a09bb90
3a09bb90 (System.Data.DataColumnCollection)
    ...[omitted rows]
    _list:3a09bbc8 (System.Collections.ArrayList)
    ...[omitted rows]

!mdt -e:2 3a09bbc8
3a09bbc8 (System.Collections.ArrayList)
[0] 3a0dc77c (System.Data.DataColumn)
    ...[omitted rows]
    _columnName:3a0dc6ec (System.String) Length=20, String="SYS_CHANGE_OPERATION"
    ...[omitted rows]
    _storage:3a0dc908 (System.Data.Common.StringStorage)
    ...[omitted rows]

!mdt 3a0dc908
3a0dc908 (System.Data.Common.StringStorage)
    Column:3a0dc77c (System.Data.DataColumn)
    ...[omitted rows]
    values:40a18e98 (System.String[], Elements: 65536)
```

Let's stop here for a minute. We've found that the first column is named "SYS_CHANGE_OPERATION", which confirms that this is a GetChangeRows synchronizer table (that's the hard-coded column name in the SQL procedure that generates the change table result). We've also found the column's backing array of up to 65,536 values. This column holds a command code describing which operation to perform (update, insert, delete, or re-sync).

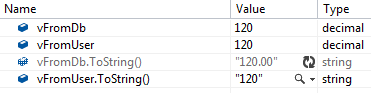

```
!mdt -e:2 -count:5 40a18e98
40a18e98 (System.String[], Elements: 65536)
[0] 3a0dc8e0 "R"
[1] 3a0dc9a8 "R"
[2] 3a0dca14 "R"
[3] 3a0dca80 "R"
[4] 3a0dcaec "R"
```

The elements we looked at all carry command code "R", which is used when the client needs to resync the entire table. That explains why there are so many rows! The server already has a mechanism for telling the client to request a subset of rows. Perhaps, to save memory, the server should ALWAYS require the client to request a subset of rows during a resync? That would mean smaller DataTable allocations.

Looking at the source code reveals a rather silly "bug": the server has a maximum row limit. If the limit is exceeded, it refuses to send the table to the client and instead tells the client to request fewer rows. However, the server still loads the ENTIRE table from the SQL procedure and only then counts the rows using Rows.Count. This means that, at least temporarily, an unnecessarily large table exists in memory! The SQL procedure should be modified to take the maximum row count as a parameter and simply return a message to the service if there are too many rows (rather than returning the whole table, having the service instantiate it in memory, and then counting the rows).
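Until the stored procedure changes, a service-side mitigation is possible. The sketch below swaps in a different technique from the SQL-side fix described above: it streams rows through an `IDataReader` and gives up as soon as one row more than the limit appears, so the oversized DataTable is never fully built. `ChangeTableLoader`, `LoadWithLimit`, and the "null means too many rows" convention are hypothetical. SQL Server still has to produce the rows, so the stored-procedure change remains the cleaner fix.

```csharp
using System;
using System.Data;

public static class ChangeTableLoader
{
    // Copies rows from 'reader' into a new DataTable, but stops at row maxRows + 1
    // and returns null, so an oversized result is never fully materialized.
    public static DataTable LoadWithLimit(IDataReader reader, int maxRows)
    {
        if (maxRows < 0) throw new ArgumentOutOfRangeException(nameof(maxRows));

        var table = new DataTable();
        try
        {
            // Mirror the reader's schema (name and CLR type of each column).
            for (int i = 0; i < reader.FieldCount; i++)
                table.Columns.Add(reader.GetName(i), reader.GetFieldType(i));

            var values = new object[reader.FieldCount];
            while (reader.Read())
            {
                if (table.Rows.Count == maxRows)
                {
                    // One row too many: discard what we have; the caller tells
                    // the client to request fewer rows instead.
                    table.Dispose();
                    return null;
                }
                reader.GetValues(values);
                table.Rows.Add(values); // copies the values into the column storage
            }
            return table;
        }
        catch
        {
            table.Dispose();
            throw;
        }
    }
}
```

If the reader is a SqlDataReader, closing it early still reads through the remaining rows unless the associated SqlCommand is cancelled first (call `SqlCommand.Cancel()` before closing the reader), so the early exit only saves server work with that extra step.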

The "final" twist

We've uncovered a lot so far, but there's still that monster DataTable to investigate.

```
!do 53071010
Name:        System.Data.DataRow[]
MethodTable: 711493a0
EEClass:     7322f4e4
Size:        16777228(0x100000c) bytes
Array:       Rank 1, Number of elements 4194304, Type CLASS
```

Wow! That's a huge table! The array has room for 4,194,304 rows, FAR more than the maximum row limit (described previously) for anything that should ever be sent! We can trace the object's life through the garbage collector:

```
!gcroot 53071010
Finalizer Queue:
    19d71aac
    -> 19d71aac System.Data.DataColumn
    -> 19d6c49c System.Data.DataTable
    -> 19d6c624 System.Data.RecordManager
    -> 53071010 System.Data.DataRow[]

Warning: These roots are from finalizable objects that are not yet ready for finalization.
```

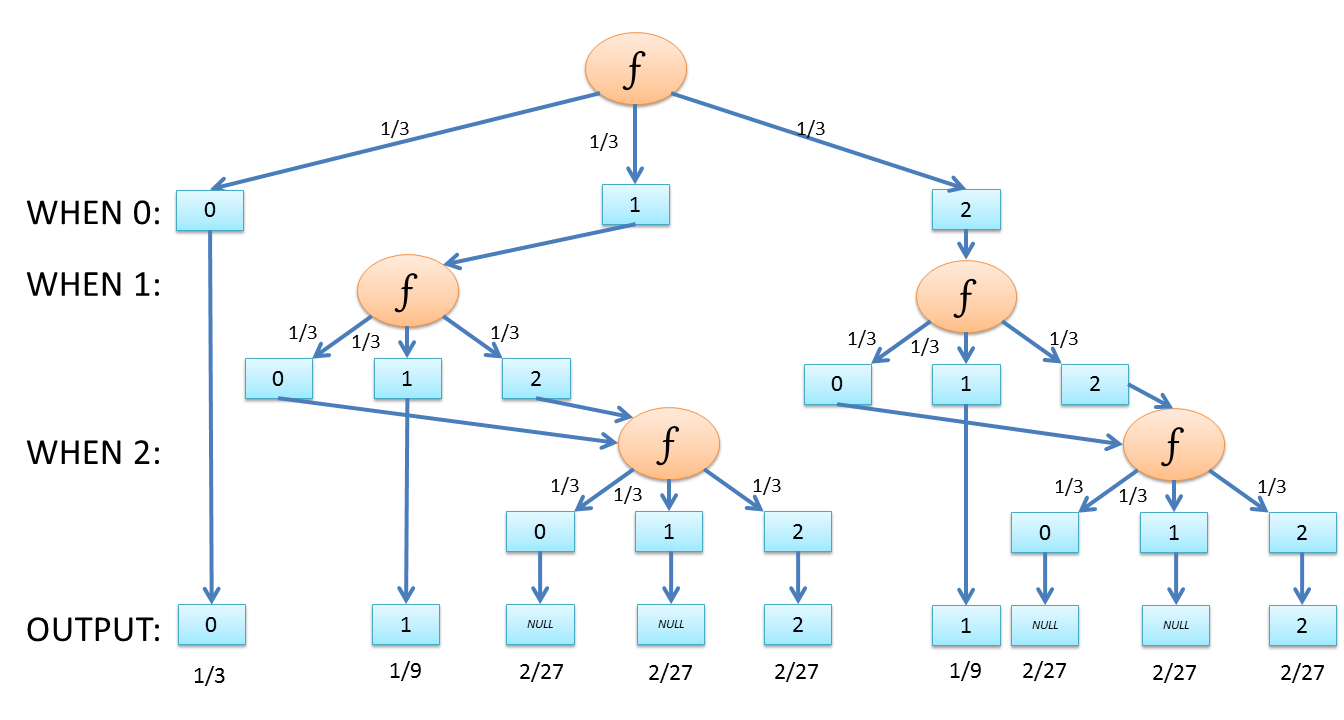

Wait, what? I had always assumed objects had two states: alive (actively referenced) or ready for garbage collection. It turns out there is a third state: waiting on finalization. Objects that have a finalizer are registered in the finalization queue, and such an object must be finalized before its memory can be reclaimed, even if no references to it remain. A single background finalizer thread works through these objects. (There is a good discussion of this on SO.)

Normally, this cost is avoided by having the Dispose method call GC.SuppressFinalize, which tells the runtime that the object no longer needs to be finalized.
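A minimal illustration of that standard dispose pattern follows. `FinalizableResource` and `FinalizationDemo` are hypothetical types, not DataTable's or DataColumn's actual implementation; they only show that a disposed instance never reaches its finalizer, while an abandoned one has to wait for the finalizer thread.

```csharp
using System;
using System.Runtime.CompilerServices;
using System.Threading;

public class FinalizableResource : IDisposable
{
    private static int _finalizerRuns;
    private bool _disposed;

    // Number of instances whose finalizer has run so far.
    public static int FinalizerRuns => Volatile.Read(ref _finalizerRuns);

    ~FinalizableResource()
    {
        Interlocked.Increment(ref _finalizerRuns);
        Dispose(false);
    }

    public void Dispose()
    {
        Dispose(true);
        // This instance no longer needs finalizing, so an ordinary collection can
        // reclaim it without waiting for the finalizer thread.
        GC.SuppressFinalize(this);
    }

    protected virtual void Dispose(bool disposing)
    {
        if (_disposed) return;
        // Release unmanaged resources here (and managed ones only when disposing is true).
        _disposed = true;
    }
}

public static class FinalizationDemo
{
    // Separate, non-inlined methods so no local variable keeps the instance
    // reachable while the caller forces a collection.
    [MethodImpl(MethodImplOptions.NoInlining)]
    public static void CreateAndDispose() => new FinalizableResource().Dispose();

    [MethodImpl(MethodImplOptions.NoInlining)]
    public static void CreateAndAbandon() => new FinalizableResource();
}
```

After `CreateAndDispose()` followed by `GC.Collect()` and `GC.WaitForPendingFinalizers()`, `FinalizerRuns` stays at 0. An instance from `CreateAndAbandon()` instead survives its first collection, gets queued for the finalizer thread, and its memory can only be reclaimed by a later collection, which is exactly the delay that matters when the instance holds on to a multi-megabyte table.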

But Dispose is normally only needed when an object holds unmanaged resources. Why is finalization involved here, for a DataTable?

According to one discussion:

The Dispose method in DataSet exists ONLY because of side effect of inheritance-- in other words, it doesn't actually do anything useful in the finalization. The class DataSet inherits from "System.ComponentModel.MarshalByValueComponent" which implements the IDisposable interface because it is a component.

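Great. So DataTables, and their DataColumns, inherit a finalizer from MarshalByValueComponent, which means they can sit in the finalization queue for a while before the garbage collector reclaims them. In this dump, the finalizable root is actually one of the table's DataColumns, which references its DataTable and, through it, the huge DataRow[]. But wait! When we return a DataTable via WCF, WCF calls Dispose on both the incoming parameters and the returned values (OperationBehaviorAttribute.AutoDisposeParameters is true by default). So how can this be explained?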

Looking at the service code, we already learned that when a too-large table is created, it is instantiated in memory and the rows counted. Then, if there are too many rows, the table is NOT returned via WCF to the client; instead, a message telling the client to "request fewer rows" is sent. What happens to the DataTable (the one with TONS of rows)? It simply goes out of scope. No Dispose is ever called. That's why the large table is waiting on the finalizer queue, blocking lots of memory.

This problem could be avoided by implementing the SQL-side row counting (as described above), and also by manually calling Dispose() if the service decides it will not be returning the table to the client.
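Both halves of that fix can be sketched together. The `ChangeTableResponse` type below is hypothetical (the service's real contract isn't shown here). It owns the table it carries and disposes it in its own Dispose, so WCF's automatic disposal of returned values, as described above, reaches the table. Its `Create` method disposes a rejected table explicitly, because nothing else will.

```csharp
using System;
using System.Data;
using System.Runtime.Serialization;

// Hypothetical response contract for a GetChangeTable-style operation.
[DataContract]
public sealed class ChangeTableResponse : IDisposable
{
    [DataMember] public DataTable Changes { get; set; }
    [DataMember] public bool RequestFewerRows { get; set; }

    // When WCF disposes the returned object after sending it, the table goes with it.
    public void Dispose()
    {
        Changes?.Dispose();
        Changes = null;
    }

    // Takes ownership of 'changes': it is either handed to the response or disposed here.
    public static ChangeTableResponse Create(DataTable changes, int maxRows)
    {
        if (changes.Rows.Count > maxRows)
        {
            changes.Dispose(); // never returned to WCF, so nothing else will dispose it
            return new ChangeTableResponse { RequestFewerRows = true };
        }
        return new ChangeTableResponse { Changes = changes };
    }
}
```

Making the response own the table keeps a single disposal rule: whoever holds the response disposes it, and the table never outlives it.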

Conclusions

Garbage collection isn't magic. Some care is needed to ensure that long-running applications and services don't leak memory. WinDbg is an awesome tool for uncovering memory leaks.

Planned changes for this particular application follow. Some of them might seem obvious in hindsight, but at the time I simply assumed the overhead wouldn't be a problem.

- In RDL handling

  - report.ReleaseSandboxAppDomain();

  - Unsubscribe from any SubreportProcessing events

  - Clear the data sources

  - Dispose the report

- In GetChangeTable

  - The server should ALWAYS require the client to request a subset of rows when a resync is performed

  - The SQL procedure should be modified to take the maximum row count as a parameter and simply return a message to the service if there are too many rows (rather than returning the whole table, having the service instantiate it in memory, and then counting the rows)

  - If the service generates a DataTable that it decides not to return to the client, Dispose() should be called

  - When a DataTable is placed into a larger object for WCF serialization, that object should implement IDisposable and, on Dispose, dispose the DataTable